Event contracts used to be guidance. With agents on the other end, they're the thing the system actually runs on.

Humans squint. Agents don't.

I dropped that line at the end of the last piece almost as a throwaway. The more I sit with it, the more it looks like the whole argument compressed into four words.

For the last twenty years, the consumers of enterprise data have been human beings, or systems built by human beings who were in the loop. They were tolerant. A field named amount that sometimes meant gross and sometimes net was fine, because an analyst would notice, frown, check with someone, annotate the dashboard, and move on. A timestamp that was sometimes UTC and sometimes local was fine, because somebody caught it in review. A status field with four official values and six unofficial ones was fine, because the business knew which ones actually mattered.

All of that forgiveness was invisible. We never wrote it down, because nobody ever had to. It lived in people's heads, in Slack threads, in the quiet rework that senior engineers did before a report went out.

Agents don't have any of that. When an agent consumes an event, it takes the schema at face value. It acts. It emits new events. It triggers workflows. And it does all of this in seconds, across the whole organization, at a volume humans never could. If the semantics are wrong, the mistake happens faster, wider, and more confidently than any human would have made it.

That's the shift. It isn't that agents are worse consumers than humans. In some ways they're better: more consistent, never tired, never losing focus. It's that they expose every place the contract was fuzzy and the humans were quietly fixing it.

The contract becomes the runtime

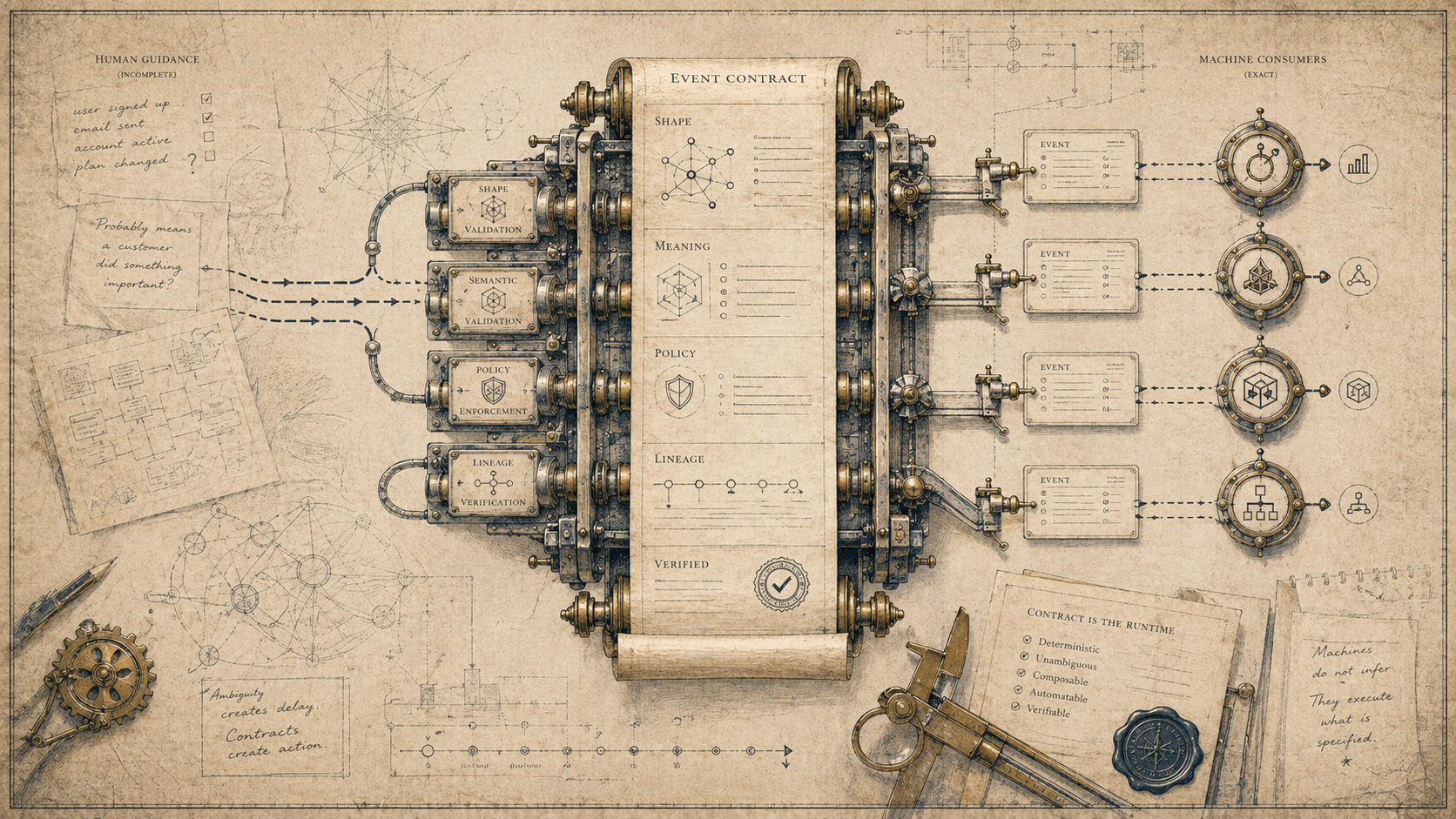

When I say "event contract" here, I don't just mean a schema registry entry.

A real event contract carries four things. There's shape, the bit we already sort of do: fields, types, what's required. There's meaning in business terms, the part that mostly lives in wikis or in nobody's head. Is amount gross or net? Is timestamp UTC or local? Does customer_id mean the billing account or the user profile? Then there's policy: who can produce the event, who can consume it, what happens when validation fails, what personal data is in it. And finally lineage: where this event came from and what depends on it downstream.
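To make those four parts less abstract, here is a minimal sketch of what a contract might look like as a data structure. Everything in it is an assumption for illustration: the `payment.captured` event, the field names, the team names, and the class layout are hypothetical, not taken from any particular registry or platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    py_type: type   # shape: the type the value must have
    required: bool  # shape: whether the field must be present
    meaning: str    # meaning: the business definition, in plain words


@dataclass(frozen=True)
class Policy:
    producers: frozenset[str]   # who may emit the event
    consumers: frozenset[str]   # who may read it
    pii_fields: frozenset[str]  # which fields carry personal data
    on_invalid: str             # what happens when validation fails


@dataclass(frozen=True)
class Lineage:
    derived_from: tuple[str, ...]  # upstream events this one comes from
    downstream: tuple[str, ...]    # events known to depend on it


@dataclass(frozen=True)
class EventContract:
    name: str
    version: int
    owner: str
    fields: dict[str, FieldSpec]
    policy: Policy
    lineage: Lineage


# Hypothetical example: every name below is made up for illustration.
PAYMENT_CAPTURED = EventContract(
    name="payment.captured",
    version=1,
    owner="payments-team",
    fields={
        "amount": FieldSpec(float, True, "Gross amount charged, including tax"),
        "timestamp": FieldSpec(str, True, "Capture time, ISO 8601, always UTC"),
        "customer_id": FieldSpec(str, True, "Billing account ID, not the user profile"),
    },
    policy=Policy(
        producers=frozenset({"payments-service"}),
        consumers=frozenset({"billing-agent", "finance-reporting"}),
        pii_fields=frozenset({"customer_id"}),
        on_invalid="reject",
    ),
    lineage=Lineage(
        derived_from=("payment.authorized",),
        downstream=("invoice.issued",),
    ),
)
```

The point of the sketch is only that the answers to "gross or net?" and "billing account or user profile?" sit in the same object as the types, where a machine can read them.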

In a human-consumer world, only the first one was really enforced by the system. The other three lived in wikis, in heads, or nowhere at all. Everything after the schema was aspirational.

In a machine-consumer world, all four have to be enforced by the platform, because nothing else is going to catch the mistake. The contract stops being documentation and becomes a runtime object. It's checked when the event is produced. It travels with the data to the consumer. It decides, in code, what to do when something is off.
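A rough sketch of what "checked when the event is produced" could mean in code follows. The contract layout, the `violations` and `publish` helpers, and the `payment.captured` fields are all hypothetical; a real platform would do this inside its broker or client library rather than in a standalone function.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical contract, expressed as plain data for the sketch.
CONTRACT = {
    "name": "payment.captured",
    "version": 1,
    "on_invalid": "reject",
    "fields": {
        "amount_gross": {"type": float, "required": True},
        "captured_at": {"type": datetime, "required": True, "utc": True},
        "note": {"type": str, "required": False},
    },
}


def violations(event: dict, contract: dict) -> list[str]:
    """Return every way the event breaks the contract (empty list if none)."""
    problems = []
    for name, spec in contract["fields"].items():
        if name not in event:
            if spec["required"]:
                problems.append(f"missing required field: {name}")
            continue
        value = event[name]
        if not isinstance(value, spec["type"]):
            problems.append(f"{name}: expected {spec['type'].__name__}")
            continue
        # A semantic rule, not just a shape rule: timestamps must be UTC.
        # Naive datetimes have utcoffset() of None, so they fail too.
        if spec.get("utc") and value.utcoffset() != timedelta(0):
            problems.append(f"{name}: timestamp must be timezone-aware UTC")
    for name in sorted(event.keys() - contract["fields"].keys()):
        problems.append(f"undeclared field: {name}")
    return problems


def publish(event: dict, contract: dict, sink: list) -> None:
    """Check the event at produce time, then ship it with its contract identity."""
    problems = violations(event, contract)
    if problems and contract["on_invalid"] == "reject":
        raise ValueError(f"{contract['name']} rejected: {problems}")
    # The envelope carries the contract name and version to the consumer.
    sink.append({
        "contract": contract["name"],
        "contract_version": contract["version"],
        "payload": event,
    })
```

Here a naive or local timestamp is rejected at the producer, before any agent has a chance to act on it, which is the behaviour the paragraph above describes.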

That's a much heavier thing than a schema registry. It's closer to an API, except the API is the event itself, and the surface area is every producer and consumer in the organization.

What actually breaks

Let me make this concrete, because the abstraction can hide how painful this gets in practice.

A few months back I watched this happen on a real platform. There was an event called order.completed. It had a schema. The schema had been stable for three years. Everyone trusted it. Then somebody pointed an agent at it to send the "your order has arrived" kind of notification.

The agent was polite, fast, and wrong about a quarter of the time. order.completed was emitted when the order left the warehouse, not when it arrived at the customer's door. Humans consuming it had always known that. The event was called order.completed because, internally, completion meant completion from the warehouse's perspective, and that's who owned the event.

The agent didn't know. The agent trusted the name. And because the name was load-bearing inside the agent's reasoning, it built workflows, ran them, and sent thousands of premature notifications before anyone noticed.

The fix wasn't a smarter agent. The fix was a cleaner contract. order.completed got split into order.shipped and order.delivered, with explicit meaning, explicit ownership, and a deprecation window for the old event. The humans consuming it didn't need any of that. The agent did.
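A deprecation window like that can itself be something the platform enforces. The sketch below is one hypothetical way to do it: the `DEPRECATIONS` table, the `check_subscription` helper, and the sunset date are all invented for illustration, and only the three event names come from the story above.

```python
from datetime import date

# Hypothetical deprecation registry. The sunset date is a placeholder.
DEPRECATIONS = {
    "order.completed": {
        "replaced_by": ("order.shipped", "order.delivered"),
        "sunset": date(2030, 1, 1),
    },
}


def check_subscription(event_name: str, today: date) -> str:
    """Decide whether a consumer may still subscribe to an event."""
    entry = DEPRECATIONS.get(event_name)
    if entry is None:
        return "ok"
    replacements = ", ".join(entry["replaced_by"])
    if today >= entry["sunset"]:
        # After the window closes, the old event is a hard failure.
        raise LookupError(
            f"{event_name} was retired; subscribe to one of: {replacements}"
        )
    # Inside the window, the subscription works but is flagged.
    return (
        f"deprecated: {event_name} is retired on "
        f"{entry['sunset'].isoformat()}; migrate to one of: {replacements}"
    )
```

An agent that subscribes to order.completed during the window gets a machine-readable nudge toward the two replacements, and after the window it gets an error rather than a quietly ambiguous event.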

Multiply that across every fuzzy event in your organization. Now you can see the work.

Ownership is the hard part

The honest thing to say here is that writing the contract isn't the hard part. Most teams can write a decent schema if you make them. The hard part is that contracts need owners, and ownership has implications nobody loves.

If you own an event, you own what it means, forever, for every consumer that depends on it. You can't change its meaning silently. You can't repurpose it. You can't add a weird edge case without talking to the people downstream. Your schedule is no longer yours alone; it's coupled to everyone who subscribed.

That's an uncomfortable shift for teams that grew up shipping against their own roadmap. It's basically API ownership, but for data, and at a volume and breadth most organizations have never had to govern.

The organizations that will do this well are the ones that figure out, early, that events need product managers. Not data stewards in the old compliance sense. Actual product people, accountable for the event as a product, with consumers, versioning, a roadmap, and a deprecation policy. The event mesh from the last piece only works if someone owns each event in it.

Governance becomes code

The other thing that changes is how governance works at all.

The old model was committee-driven. A data governance council met monthly. Policies were documents. Compliance was a question of "whose job is it to remember this by the time the audit comes around?" It worked, sort of, when decisions moved at human speed and data moved in batches.

It doesn't survive contact with an event mesh. When hundreds of agents are producing and consuming events in real time, you can't have a human in the loop for every policy question. The policy has to be expressed in a form the platform can enforce at event time.

PII rules live on the contract, not in a spreadsheet. An event marked as carrying personal data routes through the right masking layer automatically, and consumers without the right capability simply don't see it. The same goes for retention: an event with a 30-day window expires after 30 days, and any consumer that tries to hold it longer fails its own contract. Access works the same way. "Only these teams can consume this event" becomes a line of configuration, not a Slack thread. And changes to the contract itself follow a codified process, with automated impact analysis on every downstream consumer and automated deprecation flows for any producer trying to break the old shape.
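Here is a small sketch of access, retention, and PII rules expressed as configuration and enforced at delivery time. It is an illustration under assumptions: the `customer.updated` event, the consumer names, the `"pii"` capability, and the `deliver` function are all hypothetical, and this version masks personal fields rather than withholding the whole event.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy block that would live on the event's contract.
POLICY = {
    "event": "customer.updated",
    "pii_fields": {"email", "phone"},
    "retention": timedelta(days=30),
    # Consumer name -> capabilities granted to it.
    "consumers": {
        "billing-agent": {"pii"},
        "analytics-agent": set(),
    },
}


def deliver(event, emitted_at, consumer, now, policy=POLICY):
    """Apply access, retention, and PII rules before a consumer sees an event."""
    # Access: only listed consumers may read the event at all.
    if consumer not in policy["consumers"]:
        raise PermissionError(f"{consumer} may not consume {policy['event']}")
    # Retention: past the window, the event no longer exists for anyone.
    if now - emitted_at > policy["retention"]:
        return None
    # PII: consumers without the capability get masked values.
    if "pii" in policy["consumers"][consumer]:
        return dict(event)
    return {
        key: ("***" if key in policy["pii_fields"] else value)
        for key, value in event.items()
    }
```

Adding a team to the event becomes a one-line change to `consumers`, which is the "line of configuration, not a Slack thread" idea in miniature.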

None of this is optional once agents are your majority consumer, because agents won't remember what the committee decided. They'll just do whatever the contract says.

Governance stops being a separate function and becomes a property of the platform. "Policy as code" finally stops being a slogan and starts being the only way the thing runs.

The semantic layer, promoted

People have been talking about the semantic layer for years. Metric definitions. Business glossaries. The headless BI idea. It's always been a good idea, and it's always been something the organization could live without.

Not anymore. The semantic layer stops being a BI concern and becomes the substrate every agent in the business is standing on. When an agent asks "what was our revenue last quarter," it doesn't want ten numbers from ten systems. It wants one number from one trusted definition, and it wants that definition machine-readable, so it knows what it actually asked for.

The effect is that the semantic layer ends up living next to the events themselves, with the same ownership, versioning, and governance rules. Every metric, every dimension, every definition gets the same rigour as an event does.
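As a sketch of what a machine-readable definition might look like, the example below treats a metric as a versioned, owned object that points at the event it summarizes. The `MetricDefinition` class, the `revenue` definition, the `amount_net_cents` field, and the `evaluate` helper are hypothetical and deliberately minimal; real semantic layers are far richer than a single sum.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    version: int
    owner: str
    description: str   # the business meaning, stated once
    source_event: str  # the event this metric is computed from
    value_field: str   # the payload field that is aggregated
    aggregation: str   # only "sum" is supported in this sketch


# Hypothetical definition: names and wording are illustrative.
REVENUE = MetricDefinition(
    name="revenue",
    version=1,
    owner="finance-team",
    description="Sum of net captured payment amounts, in cents",
    source_event="payment.captured",
    value_field="amount_net_cents",
    aggregation="sum",
)


def evaluate(metric: MetricDefinition, events: list[dict]) -> dict:
    """Compute the metric and return it together with its definition."""
    if metric.aggregation != "sum":
        raise NotImplementedError(metric.aggregation)
    total = sum(
        event["payload"][metric.value_field]
        for event in events
        if event["name"] == metric.source_event
    )
    # The answer carries its own definition, so the caller knows what it asked for.
    return {
        "metric": metric.name,
        "version": metric.version,
        "definition": metric.description,
        "value": total,
    }
```

The design choice worth noticing is that the result includes the metric's version and description, so an agent receives one number along with the definition that produced it.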

This is going to be a quiet but significant architectural decision for a lot of organizations in the next few years. Where does the semantic layer live? Who owns it? How does it stay in sync with the events it summarizes? If you've never had to answer those questions, you're about to.

The fight ahead

I called this the next real architectural fight in the last piece, and I think it is, for a specific reason. It's the layer where the cost of getting it wrong changes phase.

Getting your database wrong costs you a rebuild. Getting your pipeline wrong costs you a refactor. Getting your contracts wrong in a machine-consumer world costs you every downstream agent acting on bad meaning, at full speed, for as long as it takes you to notice. And because agents are fast and confident, you might not notice for a while.

That's why it's a fight worth paying for now, before you have a hundred agents in production. Contracts are cheap to define up front and painful to retrofit under load. The organizations that take this seriously while the agent count is still small will quietly run laps around the ones that don't.

Same argument as always, in new clothes: clarity is infrastructure. Platforms behave like operating systems now. Events are the primitive everything else sits on top of, and contracts are where the real interface lives. The advantage in the AI era goes to the organizations that did the boring work of making their meaning legible, not just to humans, but to the machines now acting on their behalf.

In the next piece I want to flip the camera. If the machines are doing more of the work, what's actually left for the human in the loop? "Human oversight" does a lot of load-bearing work in every AI architecture diagram I see, and I'm not sure we've been honest about what it means once the system is event-driven and agent-rich.
