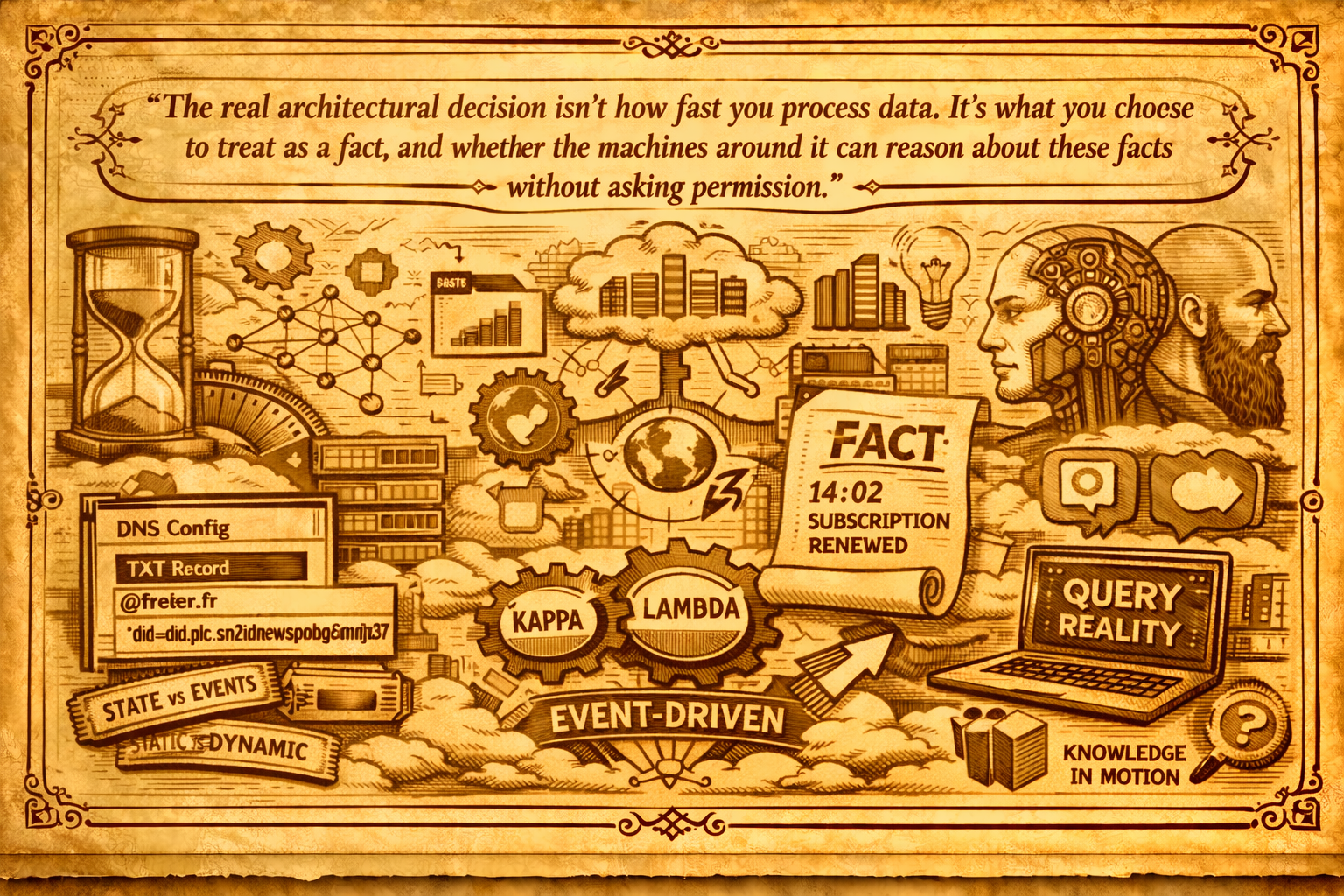

The real architectural decision isn't how fast you process data. It's what you choose to treat as a fact, and whether the machines around it can reason about those facts without asking permission.

For years, data architecture debates have circled the same axis: batch or streaming. Hadoop, then Spark, then Flink. Lambda, then Kappa. Every vendor pitch and every platform rewrite eventually collapsed into one question: how fresh is fresh enough, and how much are you willing to pay for it?

I don't think that's the interesting question anymore.

It was, once. When moving a billion rows took twelve hours, the choice between overnight batch and "near-real-time" was a real trade-off. Cost, complexity, and operational risk all scaled with freshness. You picked a cadence and you built around it.

That constraint is mostly gone now. Modern engines are fast enough. Micro-batches look a lot like streams from one side; change data capture blurs the line from the other. Most serious platforms today run both modes inside the same runtime, often inside the same query. The distinction still shows up on slides, but it doesn't do much architectural work anymore.

What's replaced it is a quieter, more consequential question: whether the organization thinks in events, or in tables.

Tables describe state. Events describe facts.

A table says the customer's status is active. That's a snapshot. A collapsed view of everything that ever happened to that customer, reduced to one current answer. Useful, but derivative.

An event says the customer's subscription was renewed at 14:02 on Tuesday, via the mobile app, with a discount code applied. That's a fact. A timestamped claim about something that happened in the world. It doesn't need to be recomputed. It doesn't go stale. You can derive any number of tables from a stream of events; the reverse only works if the table is already storing events in disguise, like an audit log, a CDC feed, or an event-sourced ledger. A plain table of current state has thrown away the order, the timing, and usually the why, and no amount of querying brings them back.
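A minimal Python sketch of that asymmetry, assuming hypothetical `subscription.*` event names and fields that mirror the renewal example: the current status is a fold over the event history, and the fold keeps only the last answer.

```python
from dataclasses import dataclass
from datetime import datetime
from functools import reduce


@dataclass(frozen=True)
class Event:
    # Hypothetical event shape: a type, a timestamp, and the context attached to it.
    type: str
    at: datetime
    context: dict


def apply_event(status: str, event: Event) -> str:
    """Fold one event into the current status (the 'table' view)."""
    if event.type in ("subscription.started", "subscription.renewed"):
        return "active"
    if event.type == "subscription.cancelled":
        return "cancelled"
    return status  # events this projection ignores leave the state unchanged


events = [
    Event("subscription.started", datetime(2024, 1, 2, 9, 0), {"channel": "web"}),
    Event("subscription.cancelled", datetime(2024, 3, 1, 17, 30), {"reason": "price"}),
    Event("subscription.renewed", datetime(2024, 3, 5, 14, 2),
          {"channel": "mobile", "discount_code": "SPRING"}),
]

# Derive the snapshot: three facts collapse into one current answer.
current_status = reduce(apply_event, events, "none")
print(current_status)  # active

# From "active" alone there is no way back to the March cancellation,
# its reason, or the fact that the renewal came through the mobile app.
```

Any number of other projections (renewals per channel, churn reasons) can be folded from the same list; none of them can be recovered from `current_status`.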

Once you see that, the old debate looks different. Batch vs. streaming was always a fight about how often to recompute the snapshot. Event-driven thinking asks something else: what are the facts we're willing to commit to, and who's allowed to act on them?

That's a modeling problem, not a latency problem.

The organization has to think in events first

Most of the platforms I see struggling to "go real-time" aren't actually struggling with the technology. They're struggling with the fact that the business has no agreed-upon vocabulary of events. Ask five people what a renewal is and you'll get five answers. Does it include trial conversions? Does it fire on payment, or on entitlement? If a failed payment is retried the next day, is that one event or two?
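Answering those questions means writing the answers down somewhere a machine can enforce them. A minimal sketch, assuming one particular set of answers (the fact is the entitlement, trial conversions are a separate event, and there is one renewal per billing period); the `BillingAttempt` record and its fields are hypothetical, and another business could reasonably choose the opposite rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BillingAttempt:
    """Hypothetical raw record from a billing system (not yet a fact)."""
    subscription_id: str
    billing_period: str          # e.g. "2024-03"
    is_trial_conversion: bool
    payment_succeeded: bool
    entitlement_granted: bool


def to_renewal_events(attempts: list) -> list:
    """Turn raw attempts into `subscription.renewed` facts under explicit rules."""
    emitted = set()
    events = []
    for a in attempts:
        # Rule 1 (a decision, not a given): trial conversions are a different event.
        if a.is_trial_conversion:
            continue
        # Rule 2: the renewal is the entitlement being granted, not the payment clearing.
        if not a.entitlement_granted:
            continue
        # Rule 3: one renewal per subscription per billing period, so a retry
        # (or a duplicate delivery) of the same renewal collapses into one fact.
        key = f"{a.subscription_id}:{a.billing_period}"
        if key in emitted:
            continue
        emitted.add(key)
        events.append({"type": "subscription.renewed", "renewal_key": key})
    return events


attempts = [
    BillingAttempt("sub-1", "2024-03", False, False, False),  # payment failed
    BillingAttempt("sub-1", "2024-03", False, True, True),    # retried next day, succeeded
    BillingAttempt("sub-1", "2024-03", False, True, True),    # same success delivered twice
    BillingAttempt("sub-2", "2024-03", True, True, True),     # trial conversion
]
print(to_renewal_events(attempts))
# [{'type': 'subscription.renewed', 'renewal_key': 'sub-1:2024-03'}]
```

The code is trivial on purpose. The hard part is getting the organization to agree on rules 1 to 3; once it has, the retried payment is one event because the key says so, not because someone remembered to deduplicate downstream.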

You can't stream what you haven't defined. And if every team defines it differently, the stream just becomes a faster kind of chaos.

So event-driven thinking isn't really a tooling upgrade. It's a discipline that forces the organization to name things. To decide, out loud, what counts as a fact and what's just an opinion. It exposes the disagreements that tables were always happy to hide under the word "active".

Which is the same point I keep ending up at. AI rewards organizations with clean definitions. Platforms become operating systems when they coordinate around shared meaning. Events are the primitive both of those depend on.

What changes when you take events seriously

Ownership gets sharper. Every event has a producer, and the producer is accountable for what it means. There's no room for a downstream team to quietly redefine a metric because the upstream definition was inconvenient. The contract is the event.

The platform's job changes with it. It stops being a warehouse that ingests and transforms on a schedule. It becomes a coordinator: something that receives facts, enforces policy on them, makes them available to anyone entitled to consume them, and keeps a durable log of what the organization knows. That's operating-system behaviour.
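A toy version of that coordinator role, as a sketch only: a hypothetical `Coordinator` that accepts a fact only from the producer that owns its contract and only if the required fields are present, appends it to a log, and serves each consumer just the event types it is entitled to. The in-memory list stands in for a durable log, and the field check is a deliberately minimal stand-in for a real schema.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Contract:
    owner: str                   # the producer accountable for what the event means
    required_fields: frozenset   # minimal stand-in for a schema


@dataclass
class Coordinator:
    contracts: dict = field(default_factory=dict)     # event type -> Contract
    entitlements: dict = field(default_factory=dict)  # consumer -> set of event types
    log: list = field(default_factory=list)           # append-only; stands in for durable storage

    def publish(self, producer: str, event_type: str, payload: dict) -> int:
        """Accept a fact only if it honours its contract; return its log offset."""
        contract = self.contracts.get(event_type)
        if contract is None:
            raise ValueError(f"no contract registered for {event_type!r}")
        if producer != contract.owner:
            raise PermissionError(f"{producer!r} does not own {event_type!r}")
        missing = contract.required_fields.difference(payload)
        if missing:
            raise ValueError(f"{event_type!r} is missing {sorted(missing)}")
        # Envelope fields go last so a payload cannot overwrite them.
        self.log.append({**payload, "type": event_type, "producer": producer})
        return len(self.log) - 1

    def read(self, consumer: str) -> list:
        """Serve only the event types this consumer is entitled to."""
        allowed = self.entitlements.get(consumer, set())
        return [e for e in self.log if e["type"] in allowed]


coord = Coordinator()
coord.contracts["payment.failed"] = Contract("payments", frozenset({"payment_id", "reason"}))
coord.entitlements["finance"] = {"payment.failed"}

offset = coord.publish("payments", "payment.failed",
                       {"payment_id": "p-1", "reason": "card_declined"})
print(offset, len(coord.read("finance")), coord.read("marketing"))  # 0 1 []

try:
    # Another team cannot quietly publish (or redefine) an event it doesn't own.
    coord.publish("comms", "payment.failed", {"payment_id": "p-2", "reason": "x"})
except PermissionError as err:
    print("rejected:", err)
```

The ownership check is the code-level version of "the contract is the event": only the accountable producer can put a `payment.failed` fact into the record.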

And batch stops being an identity. You still run batch jobs, for cost, for heavy joins, for regulatory reporting. But batch becomes a consumption pattern on top of the event substrate, not a separate world with its own pipelines and its own version of the truth.
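As a sketch of batch as a consumption pattern: a daily aggregate computed straight from the event log instead of from a separately maintained pipeline. The log contents and field names are hypothetical.

```python
from collections import Counter
from datetime import date, datetime

# Hypothetical slice of the shared event log; in practice this would be read
# from whatever durable store backs the event substrate.
event_log = [
    {"type": "subscription.renewed", "at": "2024-03-05T14:02:00", "channel": "mobile"},
    {"type": "subscription.renewed", "at": "2024-03-05T18:40:00", "channel": "web"},
    {"type": "payment.failed",       "at": "2024-03-05T19:10:00", "channel": "web"},
    {"type": "subscription.renewed", "at": "2024-03-06T08:15:00", "channel": "mobile"},
]


def daily_renewals_by_channel(log, day: date) -> Counter:
    """A batch consumer: scan the log for one day and aggregate.

    It reads the same facts a streaming consumer would; it just reads them
    later and all at once, so there is no second version of the truth."""
    return Counter(
        e["channel"]
        for e in log
        if e["type"] == "subscription.renewed"
        and datetime.fromisoformat(e["at"]).date() == day
    )


print(dict(daily_renewals_by_channel(event_log, date(2024, 3, 5))))  # {'mobile': 1, 'web': 1}
```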

Events are how agents see clearly

This is where the argument stops being about data engineering and starts being about what the platform is for.

An agent looking at a table is doing archaeology. It sees a world that was already decided for it: status columns, flags, derived fields. Someone, somewhere, picked a moment and collapsed history into a row. The agent has to trust that collapse and work backwards from it, which is a hard thing to do well.

An agent looking at an event stream is doing something closer to observation. It sees what happened, in the order it happened, with the context attached. A payment failed. A retry succeeded. The customer opened a ticket. The ticket was assigned. The refund was authorized. Each fact stands on its own. None of them depend on a nightly job having run to aggregate them into a shape the agent can consume.

That difference is easy to underestimate until you've watched an agent try to reason about a support system from a nightly snapshot. It gets confused about whether a ticket was still open at 11:42 or was already closed. It can't tell you why a field changed. It'll happily invent a plausible reason, because that's what models do when the context is thin. Events give it the context back. They restore the order, the actors, and the time.
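The 11:42 question is exactly what an event history can answer and a snapshot cannot. A minimal sketch, assuming a hypothetical list of ticket events: replay only the events that had happened by the time in question, and return the event that set the status along with the status itself.

```python
from datetime import datetime

# Hypothetical ticket history.
ticket_events = [
    {"ticket": "T-7", "type": "ticket.opened",   "at": datetime(2024, 3, 5, 9, 15),  "by": "customer"},
    {"ticket": "T-7", "type": "ticket.assigned", "at": datetime(2024, 3, 5, 10, 2),  "by": "triage-bot"},
    {"ticket": "T-7", "type": "ticket.closed",   "at": datetime(2024, 3, 5, 11, 50), "by": "support:sam"},
]


def status_as_of(events, ticket_id: str, when: datetime):
    """Replay only the events that had happened by `when`.

    Returns the status at that moment plus the event that set it,
    so the answer carries its own explanation (who, and when)."""
    status, cause = None, None
    for e in events:
        if e["ticket"] != ticket_id or e["at"] > when:
            continue
        if e["type"] in ("ticket.opened", "ticket.reopened"):
            status, cause = "open", e
        elif e["type"] == "ticket.closed":
            status, cause = "closed", e
    return status, cause


status, cause = status_as_of(ticket_events, "T-7", datetime(2024, 3, 5, 11, 42))
print(status, cause["by"])  # open customer
```

A nightly snapshot taken after 11:50 would simply say "closed". The replay says the ticket was still open at 11:42 and points at the event that made it so, which is the context an agent otherwise has to invent.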

This is also why agents are better at reacting than at reporting. Reporting is a pull model: you ask a question, the agent queries a table, it summarizes. Reacting is a push model: something happens, an event appears, an agent that subscribed to that kind of event wakes up and does something about it. You don't need to orchestrate it. You don't need to predict which agent needs which data at which time. You publish the fact, and the right consumers decide what it means for them.
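The push model needs very little machinery to illustrate. A minimal in-process sketch, assuming a hypothetical `EventBus` class (a real deployment would sit on a broker): the producer publishes a fact, and only the handlers subscribed to that event type wake up.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """A minimal in-process pub/sub sketch, not a real broker."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)  # event type -> list of handlers

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event: dict) -> None:
        # Push: the producer doesn't know or choose who reacts.
        for handler in self._subscribers.get(event["type"], []):
            handler(event)


bus = EventBus()
woken = []

# A hypothetical agent that only wakes up when a payment fails.
bus.subscribe("payment.failed", lambda e: woken.append(("retry-agent", e["payment_id"])))

bus.publish({"type": "payment.succeeded", "payment_id": "p-1"})  # no subscriber; nothing runs
bus.publish({"type": "payment.failed", "payment_id": "p-2"})

print(woken)  # [('retry-agent', 'p-2')]
```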

The important shift here is that an agent on an event stream is no longer a chatbot with tools. It's a participant in the system. It observes, it decides, it acts, and its actions become new events other participants can see. The platform starts to feel less like a data store and more like a shared environment for humans and machines doing work side by side.

From event stream to event mesh

Once that push model is working for one team, something interesting happens. Other teams want in. Not because they were told to. Because once a useful event exists, it tends to attract consumers.

The payments team publishes payment.failed. The comms team starts sending proactive emails. The CX team spins up an agent that looks for patterns across failures and flags suspicious merchants. Finance starts reconciling in near-real-time instead of end-of-day. Nobody coordinated this. They all just subscribed.

That's what people mean by an event mesh, though the term is doing a lot of heavy lifting. The practical reality is simpler: once events become the primary interface, the platform stops being a hub-and-spoke system with a warehouse in the middle. It becomes a fabric. Any producer can publish. Any consumer, human or otherwise, can subscribe. The platform's job is to keep the fabric consistent: the events are well-defined, the policies are enforced, the schemas are respected, nothing gets lost in transit, and every consumer sees a coherent view.
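One concrete reading of "keep the fabric consistent" is the approach log-based brokers such as Kafka take: a single ordered log, with each consumer tracking its own read position (offset). The sketch below is an in-memory toy under that assumption; `SharedLog` and `poll` are hypothetical names, not any broker's API.

```python
class SharedLog:
    """One ordered log, many independent readers.

    Each consumer keeps its own offset, so consumers progress independently,
    a late joiner can replay history, and everyone sees the same order."""

    def __init__(self) -> None:
        self._events = []    # the ordered facts
        self._offsets = {}   # consumer name -> next position to read

    def append(self, event: dict) -> None:
        self._events.append(event)

    def poll(self, consumer: str, max_events: int = 10) -> list:
        start = self._offsets.get(consumer, 0)  # new consumers start at the beginning
        batch = self._events[start:start + max_events]
        self._offsets[consumer] = start + len(batch)  # simplification: commit on read
        return batch


log = SharedLog()
for i in range(3):
    log.append({"type": "payment.failed", "payment_id": f"p-{i}"})

comms = log.poll("comms")        # reads all three
log.append({"type": "payment.failed", "payment_id": "p-3"})
finance = log.poll("finance")    # joins late, still sees all four, in order
comms_next = log.poll("comms")   # picks up only the new one

print([e["payment_id"] for e in finance])     # ['p-0', 'p-1', 'p-2', 'p-3']
print([e["payment_id"] for e in comms_next])  # ['p-3']
```

Committing the offset inside `poll` is a simplification. A real consumer would commit after it has finished processing, so that a crash mid-batch leads to a replay rather than a lost event.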

Agents thrive in that fabric. A good agent is basically a subscriber with judgment. It watches for the events it cares about, applies a policy or a model, and sometimes publishes new events of its own. If it decides a payment failure is actually fraud, it emits fraud.suspected. Another agent, somewhere else, is subscribed to fraud.suspected and kicks off a review workflow. A third one notices the review was cleared and re-enables the merchant. None of these agents need to know about each other. The event mesh is what connects them.
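The chain in that paragraph can be sketched with agents written as plain functions that receive an event and may publish new ones. Everything here is hypothetical: the `Mesh` class is an in-process stand-in for a real event mesh, the fraud rule is a placeholder threshold where a policy or model would go, and the review resolves instantly to keep the example short.

```python
from collections import defaultdict, deque


class Mesh:
    """In-process stand-in for an event mesh in which subscribers may publish."""

    def __init__(self) -> None:
        self.subs = defaultdict(list)  # event type -> handlers
        self.history = []              # every fact that flowed through, in order
        self._pending = deque()
        self._draining = False

    def subscribe(self, event_type, handler) -> None:
        self.subs[event_type].append(handler)

    def publish(self, event: dict) -> None:
        self._pending.append(event)
        if self._draining:
            return  # published from inside a handler; the loop below delivers it
        self._draining = True
        try:
            while self._pending:
                current = self._pending.popleft()
                self.history.append(current)
                for handler in self.subs.get(current["type"], []):
                    handler(current, self.publish)
        finally:
            self._draining = False


def fraud_agent(event, publish):
    # Placeholder judgment: a hypothetical threshold instead of a real model.
    if event["failures_last_hour"] >= 5:
        publish({"type": "fraud.suspected", "merchant": event["merchant"]})


def review_agent(event, publish):
    # Hypothetical review workflow that clears immediately for the demo.
    publish({"type": "review.cleared", "merchant": event["merchant"]})


def reinstatement_agent(event, publish):
    publish({"type": "merchant.reenabled", "merchant": event["merchant"]})


mesh = Mesh()
mesh.subscribe("payment.failed", fraud_agent)
mesh.subscribe("fraud.suspected", review_agent)
mesh.subscribe("review.cleared", reinstatement_agent)

mesh.publish({"type": "payment.failed", "merchant": "m-42", "failures_last_hour": 7})
print([e["type"] for e in mesh.history])
# ['payment.failed', 'fraud.suspected', 'review.cleared', 'merchant.reenabled']
```

Events published from inside a handler are queued rather than delivered recursively, which keeps delivery in publish order. None of the three agents references another; each knows only the event types it consumes and emits.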

It's worth pausing on how strange that would have looked five years ago. We would have built each of those interactions as a custom integration. A REST call here, a webhook there, a batch reconciliation at the end. Every new behaviour would have required someone to wire two systems together by hand. The event mesh collapses that work into a single primitive: publish, subscribe, respect the contract.

Composition, not orchestration

This is the quiet upgrade most people miss when they talk about agents.

The dominant mental model for multi-agent systems today is orchestration. A supervisor agent decides what to do, dispatches to specialist agents, collects their answers, and decides the next step. It's the same pattern we use for workflows, pipelines, and microservice orchestration. It works, up to a point, but it breaks in the usual way: the orchestrator becomes a bottleneck, a single point of failure, and a place where implicit knowledge accretes until nobody understands it anymore.

Event-driven composition flips that. There is no orchestrator. There are producers and consumers, all of them reacting to the same stream of facts. If you want a new behaviour, you don't rewire the orchestrator. You add a consumer. The system composes itself out of the event contracts. Adding an agent doesn't require anyone else to know about it. Removing one doesn't break the rest. Behaviours can run in parallel without stepping on each other, because they're not sharing a plan; they're sharing a view of what happened.
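Composition, sketched: a producer that knows only the event contract, with behaviours added and retired as subscriptions rather than as edits to a central plan. All names are hypothetical, and the module-level dictionary stands in for a real broker.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]
subscriptions = defaultdict(list)  # event type -> handlers


def publish(event: dict) -> None:
    # Iterate over a copy so a handler could unsubscribe safely mid-delivery.
    for handler in list(subscriptions[event["type"]]):
        handler(event)


def emit_payment_failure(payment_id: str) -> None:
    """Hypothetical producer: knows the event contract, not the consumers."""
    publish({"type": "payment.failed", "payment_id": payment_id})


actions = []

def comms(e: dict) -> None:
    actions.append(f"email:{e['payment_id']}")

def finance(e: dict) -> None:
    actions.append(f"reconcile:{e['payment_id']}")

subscriptions["payment.failed"] += [comms, finance]
emit_payment_failure("p-1")

# New behaviour: add a consumer. The producer and other consumers are untouched.
def fraud_screen(e: dict) -> None:
    actions.append(f"screen:{e['payment_id']}")

subscriptions["payment.failed"].append(fraud_screen)
emit_payment_failure("p-2")

# Retire a behaviour: remove a consumer. The rest keep working.
subscriptions["payment.failed"].remove(comms)
emit_payment_failure("p-3")

print(actions)
# ['email:p-1', 'reconcile:p-1', 'email:p-2', 'reconcile:p-2', 'screen:p-2', 'reconcile:p-3', 'screen:p-3']
```

In an orchestrated version, adding fraud screening would mean changing the supervisor; here `emit_payment_failure` never changes, no matter who is listening.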

That's also, incidentally, closer to how organizations actually work. No real business runs on a single orchestrator telling every department what to do. It runs on shared facts and distributed judgment. Teams watch for things they care about, act on their piece, and emit the result back into the organization. The more your platform looks like that, the less friction you create between the way the business operates and the way the software pretends it does.

It's a small reframing, but it changes what you build. You stop designing "the agent system" as a singular thing. You start designing the events, the contracts, and the substrate, and the agents become the latest generation of consumers on top. Tomorrow's agents will be better than today's, and the substrate won't need to change.

The real question

The question an engineering leader should be asking isn't "How do we move to streaming?" anymore. It isn't even "How do we add agents?" It's this: do we have a shared, durable, event-level record of what happens in our business, and does the rest of the organization, human and machine, trust it enough to act on it?

If the answer is yes, batch vs. streaming is a tooling choice and not much more, and agents are just the latest kind of subscriber on top of a substrate that was already working. If the answer is no, no amount of Flink or Kafka or GPT will fix it, because the problem was never latency and it was never models. It was agreement.

Event-driven thinking is, in the end, another version of the argument I keep coming back to in this series. The organizations that do well in the next decade won't be the ones with the most data or the fastest pipelines or the biggest AI budget. They'll be the ones that did the unglamorous work of deciding, out loud, what the facts are, and then built a substrate where humans, systems, and agents can all see those facts at the same time.

That's what an operating system actually is, when you think about it. Not a pipeline. A shared view of reality.

In the next piece, I want to look at what changes when half your consumers are machines. Event contracts were forgiving when a human was on the other end to squint at them. Agents don't squint. They act. That shifts the burden onto the schema, the semantics, and the governance around every event, and it's where I think the next real architectural fight is going to happen.
