"Human oversight" is doing a lot of work in AI architecture diagrams.

Too much, I think.

It usually shows up as a reassuring little box near the end of the flow. The model produces a recommendation. The agent proposes an action. The workflow pauses. A human reviews, approves, rejects, corrects, or escalates. The diagram moves on.

Everyone feels better.

There is a person in there. Judgment has been preserved. Accountability has a home. The system is not fully autonomous, not really, because somewhere in the machinery a human is still allowed to say no.

That is the comforting version.

The problem is that in an event-driven, agent-rich system, this idea collapses under its own vagueness. Human in the loop sounds like a control mechanism, but most of the time it is not a mechanism at all. It is a placeholder for decisions the organisation has not made yet.

Which human? In which loop? At what point? With what information? Against which policy? Under what time pressure? With what authority? And what happens if they disagree with the machine?

Until those questions have answers, "human oversight" is not an operating model. It is a drawing convention.

The loop was never the point

Part of the confusion comes from the phrase itself.

It makes you imagine a loop as a neat sequence. Something happens, the machine responds, the human checks, the system continues. That works for simple workflows. A mortgage application. A fraud alert. A content moderation queue. A generated pull request. There is a bounded object, a bounded decision, and a bounded moment where review can happen.

Modern enterprise systems are not mostly made of neat loops. They are made of streams.

A payment fails. A customer retries. A support case opens. A delivery misses its slot. A crew member signs in. A sensor emits a confidence score. A pricing rule changes. A policy threshold is crossed. A risk signal appears. An agent consumes one of these events, combines it with context, decides something, emits another event, and wakes up three other consumers that were waiting for exactly that kind of fact.

The work does not move through one loop. It moves through a fabric.

That was already true when the consumers were mostly systems built by humans. It becomes unavoidable once the consumers are agents. Agents do not wait for a dashboard. They do not open the morning report. They subscribe. They observe. They act. They produce new facts for other participants to consume.

In that world, putting a human in every loop is not cautious. It is impossible.

The volume is too high. The latency is too low. The branching factor is too wide. The number of decisions that look small locally but become meaningful in aggregate is far beyond what any group of people can inspect transaction by transaction.

So the real question is not whether humans remain involved. Of course they do. The real question is where human judgment belongs once it can no longer sit in the path of every action.

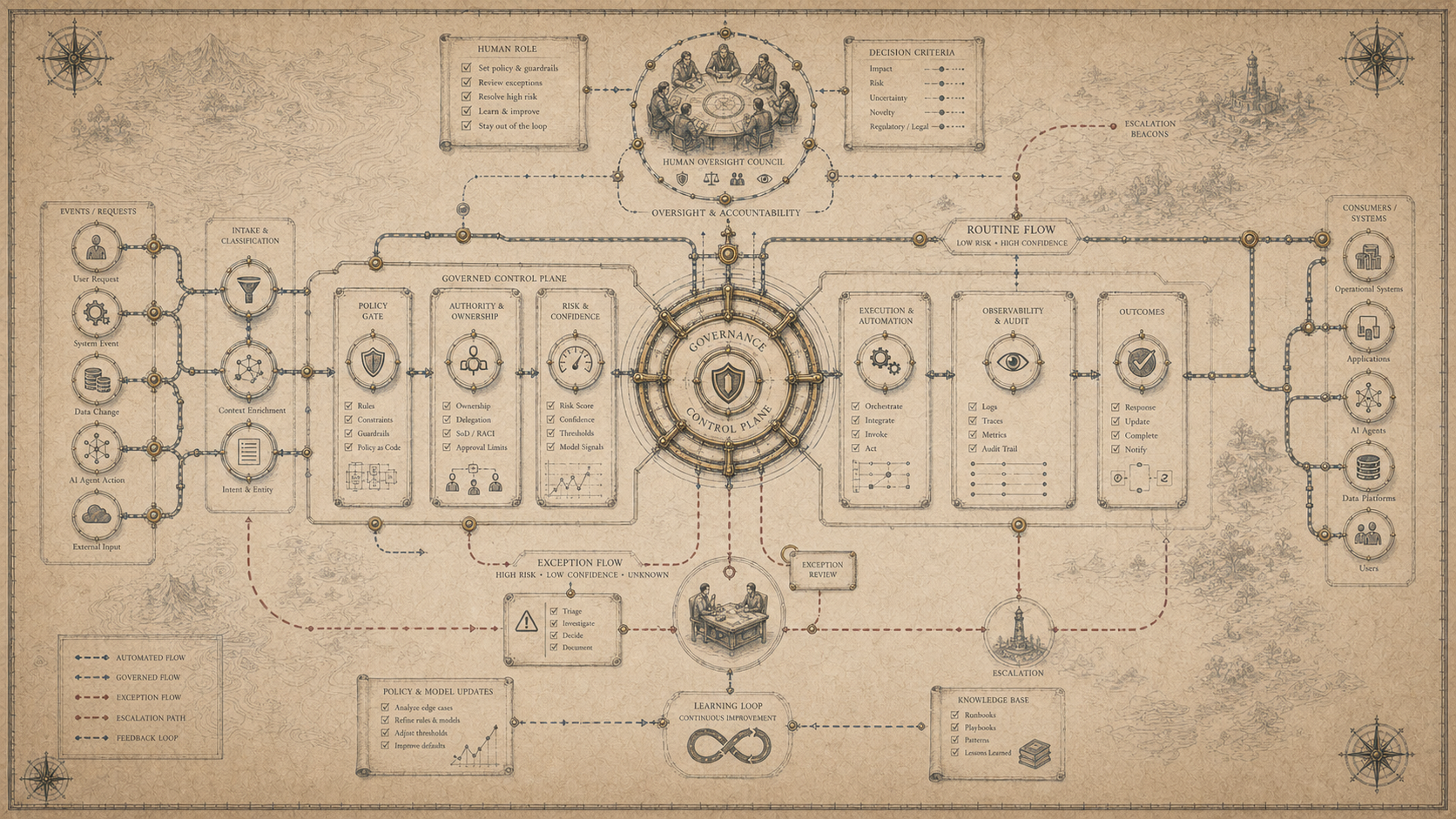

From transaction path to control plane

The answer, I think, is that humans move out of the transaction path and into the control plane.

That sounds abstract. It is a practical distinction.

The transaction path is where individual work happens. An event arrives. A rule fires. An agent summarises, recommends, routes, blocks, approves, notifies, updates, schedules, refunds, or escalates. This is the path of live operational movement. Latency matters here. Scale matters here. Automation creates leverage here.

The control plane is different. It defines what the system is allowed to do. It sets boundaries. It expresses policy. It decides which actions need approval and which do not. It defines what counts as normal, suspicious, forbidden, reversible, reportable, auditable, or exceptional. It says who owns a decision, who can change the rules, and how the organisation learns when the rules are wrong.

That is where human judgment has to become much more explicit. Not because humans are less important. Because they are too important to waste on pretending to review everything.

A human approving every low-risk action is not governance. It is latency with a face.

A human clicking approve on a queue they do not fully understand is not accountability. It is theatre.

A human asked to intervene after the system has already compressed the context, hidden the uncertainty, and framed the decision as a yes/no choice is not exercising meaningful judgment. They are being used as a liability sink.

The human belongs where judgment actually changes the shape of the system.

What should the policy be? What risk are we willing to automate? Which ambiguity should stop the flow? Which exceptions deserve human interpretation? Which actions must be reversible? Which events are allowed to trigger customer-facing behaviour? Which agent is allowed to act, and which is only allowed to recommend?

That is not the human in the loop. That is the human designing the loop.

Approval is the weakest form of oversight

The laziest version of human oversight is approval.

It is easy to draw. It is easy to explain. It maps neatly onto existing processes. A system proposes something, a human approves it, done.

Sometimes that is exactly right. High-impact decisions often need explicit approval. You probably do want a person involved before terminating employment, denying a critical service, issuing a large refund, blocking an account, grounding an aircraft, changing a regulatory submission, or taking any action where the consequences are serious, hard to reverse, or ethically sensitive.

But approval does not scale as the default pattern.

It also has a hidden failure mode. It creates the impression of control while quietly degrading the quality of judgment.

The more approvals you ask people to make, the less each approval means. Review becomes a queue. The queue becomes work. The work becomes throughput. The person approving starts optimising for the queue rather than the decision. They trust the system more than they should because most of the time the system is right enough. They stop investigating context. They approve the ordinary. They skim the unusual. Eventually the approval step becomes part of the automation it was supposed to supervise.

This is not because people are careless. It is because the design is bad.

Humans are poor at acting as rubber stamps for machine-speed systems. They are much better at handling ambiguity, setting intent, inspecting patterns, investigating exceptions, and changing the rules when the environment shifts.

That means the architecture of oversight has to be more varied than approve or reject.

Some decisions should be fully automated because the cost of human review is higher than the risk of error. Some should be automated but sampled after the fact. Some should be delayed when confidence drops below a threshold. Some should be allowed only within a bounded budget. Some should create alerts but not pause the flow. Some should require dual control. Some should be structurally impossible. And some should be escalated to humans not because they are high-value, but because they are semantically unclear.
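To make that range of options concrete, here is a minimal sketch of a per-decision router. It assumes each proposed action arrives with an amount, a confidence score and a flag saying whether its meaning is clear. `Oversight`, `Rule`, `Proposal` and `route` are hypothetical names for illustration, not any product's API. Actions with no rule at all are treated as structurally impossible.

```python
import random
from dataclasses import dataclass
from enum import Enum


class Oversight(Enum):
    AUTOMATE = "automate"                        # execute, no review
    AUTOMATE_AND_SAMPLE = "automate_and_sample"  # execute, then sample after the fact
    ALERT_ONLY = "alert_only"                    # execute and raise an alert, do not pause
    DELAY_FOR_REVIEW = "delay_for_review"        # hold until a human looks at it
    DUAL_CONTROL = "dual_control"                # needs two independent approvals
    FORBIDDEN = "forbidden"                      # not available to the agent at all
    INTERPRET = "interpret"                      # semantically unclear: a human decides what it means


@dataclass
class Rule:
    # Hypothetical per-action limits set by humans in the control plane.
    dual_control: bool = False
    min_confidence: float = 0.0
    budget_remaining: float = float("inf")
    alert_above: float = float("inf")
    sample_rate: float = 0.0


@dataclass
class Proposal:
    action: str
    amount: float
    confidence: float
    semantically_clear: bool = True


def route(p: Proposal, rules: dict[str, Rule], rng: random.Random) -> Oversight:
    rule = rules.get(p.action)
    if rule is None:
        # No rule means the action is structurally impossible, not merely discouraged.
        return Oversight.FORBIDDEN
    if not p.semantically_clear:
        # Escalate because the case is unclear, not because it is high-value.
        return Oversight.INTERPRET
    if rule.dual_control:
        return Oversight.DUAL_CONTROL
    if p.confidence < rule.min_confidence or p.amount > rule.budget_remaining:
        # Low confidence or an exhausted budget pauses the flow.
        return Oversight.DELAY_FOR_REVIEW
    if p.amount > rule.alert_above:
        return Oversight.ALERT_ONLY
    if rng.random() < rule.sample_rate:
        return Oversight.AUTOMATE_AND_SAMPLE
    return Oversight.AUTOMATE
```

The order of the checks is itself a policy decision. Here ambiguity outranks dual control, and budgets outrank alerts. Another organisation might reasonably choose a different order.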

That last category matters more than most organisations realise.

Humans handle meaning

In the previous piece, the issue was contracts. When the consumer is a machine, the contract becomes the runtime. Shape, meaning, policy, and lineage can no longer live informally in people's heads, because agents act on whatever the system makes legible.

The same is true of oversight.

Humans are not there to slow the machine down. They are there to handle meaning where meaning cannot yet be safely encoded.

That is a different job.

A human should not be asked to approve a refund because the system cannot add two numbers together. That is automation failure. A human should be asked to intervene when the refund is mechanically valid but contextually strange.

The customer is entitled to compensation, but the delay was caused by a weather event. The policy allows a goodwill payment, but the customer has received six in the last month. The disruption was operationally minor, but the customer is vulnerable. The agent has found a rule that applies, but two policies point in opposite directions. The event contract says case.resolved, but the customer has replied again with evidence that changes the situation.

That is human territory.

Not because humans are magical, but because organisations have not made all of their judgment explicit and probably never will. There will always be edge cases where the written rule meets the messy world. There will always be decisions where fairness, commercial intent, regulation, customer experience, operational reality, and reputational risk are all present at once.

Those are not loops to automate blindly. They are places where the system should slow down on purpose.

To do that well, the platform has to know when it is approaching the edge of its own understanding. That means uncertainty cannot be hidden inside a model response. It has to become part of the event fabric. Confidence, policy matches, failed assumptions, missing context, and disagreement between sources all need to be visible as first-class signals.

A system that cannot explain why it escalated is not much better than a system that never escalates.
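One way to make uncertainty a first-class signal is to attach it to the event the agent emits and derive the escalation reasons from it. The following is a minimal sketch under that assumption. The field names, the policy identifiers and the 0.8 threshold are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionSignals:
    """Hypothetical uncertainty signals an agent attaches to the event it emits."""
    confidence: float
    policy_verdicts: dict[str, str] = field(default_factory=dict)  # policy id -> "allow" or "deny"
    missing_context: list[str] = field(default_factory=list)
    source_conflicts: list[str] = field(default_factory=list)


def escalation_reasons(s: DecisionSignals, min_confidence: float = 0.8) -> list[str]:
    """Return human-readable reasons to escalate; an empty list means no escalation."""
    reasons = []
    if s.confidence < min_confidence:
        reasons.append(f"confidence {s.confidence:.2f} below {min_confidence:.2f}")
    verdicts = set(s.policy_verdicts.values())
    if "allow" in verdicts and "deny" in verdicts:
        # Two applicable policies point in opposite directions.
        reasons.append("policies disagree: " + ", ".join(sorted(s.policy_verdicts)))
    reasons.extend(f"missing context: {item}" for item in s.missing_context)
    reasons.extend(f"sources disagree: {item}" for item in s.source_conflicts)
    return reasons


def to_event(decision_id: str, s: DecisionSignals) -> dict:
    """Build the emitted event so the reason for escalation travels with it."""
    reasons = escalation_reasons(s)
    return {
        "decision_id": decision_id,
        "confidence": s.confidence,
        "escalated": bool(reasons),
        "reasons": reasons,
    }
```

A refund that is mechanically valid but contextually strange escalates with its reasons attached. It does not arrive as a bare yes/no choice.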

Exception handling is a design discipline

A lot of organisations treat exceptions as operational leftovers.

The happy path gets designed. The automation gets built. The exceptions get thrown into a queue somewhere, usually with less context than the machine had when it got confused.

That is backwards.

In an agent-rich system, exception handling is one of the most important parts of the architecture.

The exception path is where you discover what your system does not understand yet. It is where you find weak definitions, missing policies, stale contracts, ambiguous ownership, bad thresholds, and places where the real business has drifted away from the documented one.

An exception is never just a task. It is feedback.

If a human has to make the same judgment fifty times, the organisation has learned something. Either the policy should be encoded, the contract should be clarified, the agent should be constrained, the upstream event should be split, or the business should admit that the ambiguity is real and design a better escalation model around it.

This is where human oversight becomes valuable. Not as a queue of approvals. As a learning system.

The human resolves the case. The resolution becomes an event. The reason for the resolution is captured. The policy impact is reviewed. The contract is updated. The agent behaviour changes. The future exception rate moves.

That is oversight with memory.
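A minimal sketch of that memory, assuming each human resolution is emitted as an event carrying a structured reason code rather than free text. `ExceptionResolved` and `encoding_candidates` are hypothetical names. The threshold of fifty simply echoes the example above. The sketch counts repeated judgments and flags them as candidates for encoding into policy or contracts.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ExceptionResolved:
    """Hypothetical event emitted when a human resolves an exception."""
    case_id: str
    exception_type: str
    decision: str
    reason_code: str  # assumed to be a controlled vocabulary, not free text


def encoding_candidates(events, threshold: int = 50):
    """Return (exception_type, decision, reason_code) patterns seen at least `threshold` times.

    A pattern that humans resolve the same way again and again is a sign that
    a policy should be encoded, a contract clarified, or an agent constrained.
    """
    counts = Counter((e.exception_type, e.decision, e.reason_code) for e in events)
    return [pattern for pattern, n in counts.most_common() if n >= threshold]
```

Grouping on a reason code rather than on the text of the resolution is a deliberate choice. Free-text reasons rarely repeat exactly, so the pattern would stay invisible.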

Without that loop, human intervention becomes waste. The same problems return. The same decisions are made manually. The same ambiguity survives. The organisation pays for human judgment but fails to convert it into system improvement.

A mature platform should treat exceptions the way a good engineering organisation treats incidents. Not as embarrassing interruptions. As evidence.

Accountability needs somewhere to live

There is another reason the phrase human in the loop is so attractive.

It appears to solve accountability.

If a machine does something wrong, surely the accountable person is the one who approved it. Or the owner of the workflow. Or the team that deployed the agent. Or the business function that asked for the automation. Or the platform team that provided the runtime. Or the governance forum that signed off the policy.

This gets muddy quickly. And the muddiness is dangerous.

When systems were slower and more manual, accountability could often remain informal. People knew who owned what, more or less. Escalations followed relationships. Exceptions were handled by experienced operators. The organisation relied on memory, judgment, and a certain amount of social routing.

Agentic systems put pressure on that model.

If an agent consumes an event, applies a policy, takes an action, and emits a new event consumed by another agent, where does accountability sit?

It cannot sit inside the model. It cannot sit vaguely with "the business." It cannot sit with a human approver who was handed a framed decision with limited context.

Accountability has to attach to the control plane.

There should be an owner for the policy. An owner for the event. An owner for the agent capability. An owner for the action type. An owner for the exception process. An owner for the customer or operational outcome the system is trying to influence.

That sounds bureaucratic until something goes wrong. Then it is the difference between a system that can learn and a system that can only blame.

Ownership is what lets the organisation change the right thing. If the event meaning was wrong, fix the contract. If the policy was wrong, fix the policy. If the agent exceeded its authority, fix the capability boundary. If the human approval step lacked context, fix the review interface. If no one knows which of those happened, the oversight model was never real. It was decoration.

Observability becomes a human interface

This is also why observability matters differently in AI systems.

Traditional observability tells us whether the system is healthy. Are services up? Are messages flowing? Are errors increasing? Is latency acceptable? Are resources exhausted?

That still matters. But in agent-rich systems, we also need decision observability.

What did the agent see? Which events did it consume? Which contract version applied? Which policy was evaluated? Which tool was called? Which action was taken? What confidence or uncertainty was present? What alternatives were considered? What was escalated, suppressed, retried, or ignored? What changed downstream as a result?
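Those questions can become fields on a record emitted alongside every agent action. The following is a minimal sketch of such a decision trace. The field names, the disposition vocabulary and the example identifiers are assumptions for illustration, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field

DISPOSITIONS = {"executed", "escalated", "suppressed", "retried", "ignored"}


@dataclass
class DecisionTrace:
    """Hypothetical trace of judgment, recorded next to the trace of execution."""
    trace_id: str
    agent: str
    consumed_events: list[str]          # which events did it consume?
    contract_version: str               # which contract version applied?
    policies_evaluated: dict[str, str]  # which policy was evaluated, with what result?
    tools_called: list[str]             # which tool was called?
    action: str                         # which action was taken?
    confidence: float                   # what confidence was present?
    alternatives: list[str] = field(default_factory=list)       # what else was considered?
    disposition: str = "executed"                               # escalated, suppressed, ...
    downstream_events: list[str] = field(default_factory=list)  # what changed as a result?

    def __post_init__(self) -> None:
        if self.disposition not in DISPOSITIONS:
            raise ValueError(f"unknown disposition: {self.disposition}")

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)
```

Keeping the trace as structured data rather than log text is what lets a human query it later instead of reconstructing the path from fragments.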

Without that, humans cannot supervise the system in any meaningful way. They can only inspect outcomes after the fact and try to reconstruct the path from fragments.

That is not oversight. That is archaeology.

A good human control plane needs the system to produce traces of judgment, not just traces of execution. It needs to make machine behaviour legible enough that a person can challenge it, tune it, stop it, or defend it.

This is where architecture and governance become the same conversation.

The review screen is not the oversight mechanism. The audit log is not the oversight mechanism. The policy document is not the oversight mechanism.

The mechanism is the combination of event contracts, policy enforcement, identity, lineage, observability, escalation, ownership, and change control working together as part of the runtime.

That is what has to replace the little box labelled human approval.

The new operating model

So what should organisations actually design?

The starting point is a simple classification. For each action an agentic workflow can take, determine the level of human involvement it requires.

There are actions the system can take autonomously because they are low-risk, reversible, and well-bounded.

There are actions the system can take autonomously within limits, where humans set budgets, thresholds, and policy constraints rather than approving each transaction.

There are actions the system can recommend but not execute.

There are actions that require explicit approval because the impact is high or the decision is ethically, legally, commercially, or operationally sensitive.

There are actions that should never be available to the agent at all.

And there are ambiguous cases where the right answer is not approval, but interpretation.

That classification should not live in a slide deck. It should live in the platform. It should be expressed as policy. It should be versioned. It should be tested. It should be observable. It should be owned.
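As a minimal sketch of what "living in the platform" could mean, the classification can be held as versioned, owned data and checked at runtime. This sketch makes several assumptions. The action names, version label and owner are hypothetical. Limits for the "within limits" tier are assumed to be enforced separately. The ambiguous-case category is left out because it describes an individual case, not an action type. Any action that has not been classified defaults to the never-available tier.

```python
from enum import Enum


class Tier(Enum):
    AUTONOMOUS = "autonomous"
    AUTONOMOUS_WITHIN_LIMITS = "autonomous_within_limits"  # limits assumed enforced elsewhere
    RECOMMEND_ONLY = "recommend_only"
    REQUIRES_APPROVAL = "requires_approval"
    NEVER = "never"


# Hypothetical versioned, owned classification, kept under version control and reviewed like code.
POLICY = {
    "version": "v7",
    "owner": "operations-policy",
    "actions": {
        "send_status_update": Tier.AUTONOMOUS,
        "issue_goodwill_credit": Tier.AUTONOMOUS_WITHIN_LIMITS,
        "reschedule_delivery": Tier.RECOMMEND_ONLY,
        "block_account": Tier.REQUIRES_APPROVAL,
        "terminate_contract": Tier.NEVER,
    },
}


def tier_for(action: str, policy: dict = POLICY) -> Tier:
    # Default-deny: an action nobody has classified is not available to the agent.
    return policy["actions"].get(action, Tier.NEVER)


def can_execute(action: str, approved: bool = False, policy: dict = POLICY) -> bool:
    tier = tier_for(action, policy)
    if tier in (Tier.AUTONOMOUS, Tier.AUTONOMOUS_WITHIN_LIMITS):
        return True
    if tier is Tier.REQUIRES_APPROVAL:
        return approved
    # RECOMMEND_ONLY and NEVER cannot be executed by the agent, even with approval.
    return False
```

Because the classification is plain data, it can be diffed between versions, attributed to an owner, and covered by ordinary tests like the assertions that exercise `can_execute`.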

Then the human roles become clearer.

Some humans are policy owners. They decide what the system is allowed to do. Some are domain owners. They decide what events and concepts mean. Some are exception handlers. They resolve cases the system cannot safely interpret. Some are reviewers. They inspect samples and patterns to detect drift. Some are incident responders. They intervene when the system behaves unexpectedly. Some are auditors. They examine whether the system acted within its declared boundaries. Some are designers of the environment itself.

That is a much richer model than human in the loop.

It is also harder to implement, which is why people avoid naming it. But the difficulty is the point. The phrase human in the loop is popular because it lets everyone postpone the operating model. It gives the appearance of safety without forcing the organisation to say how judgment, accountability, policy, and intervention actually work.

AI will not let that vagueness survive for long.

The hard part is not keeping humans involved

The hard part is deciding what humans are for.

That is an uncomfortable sentence, but it is where the architecture is heading.

If humans are there to compensate for bad contracts, the system will not scale. If humans are there to rubber-stamp machine decisions, the oversight will decay. If humans are there to absorb accountability without authority, the organisation will create risk in a more polite form. If humans are there to catch every possible mistake, the system will drown them.

The better answer is more disciplined.

Humans define intent. Humans own meaning. Humans set boundaries. Humans handle ambiguity. Humans inspect patterns. Humans respond to exceptions. Humans change the system when reality changes.

The machines can do more of the work, but the work still needs a theory of control. It needs a way to distinguish normal action from exceptional action, confidence from uncertainty, automation from authority, and execution from judgment.

That theory cannot be added at the end. It has to be designed into the platform.

The organisations that do this well will not talk about human oversight as a vague safety blanket. They will know exactly where human judgment enters the system, what information it receives, what authority it has, how its decisions are captured, and how those decisions improve the runtime over time.

They will not put humans in every loop. They will build systems where humans govern the loops.

That is the next maturity step.

Clarity was infrastructure. Contracts became runtime. Events became the primitive. Governance became code. Now judgment has to become architecture.

Once you see that, the question changes.

It is no longer: where do we put the human?

It is: what kind of human judgment does this system require, and have we designed a place for it to matter?

In the next piece, I want to go one layer deeper into accountability. If agents act on behalf of teams, policies, and platforms, who is responsible when the action is wrong but the system behaved exactly as designed?
